It has nothing to do with ‘sensitive’.

All laser controllers operate internally using base 2 - so does your computer. To use inches requires multiple conversions from inch to mm and back, with rounding errors in every direction.

Say, internally, an app uses a byte to represent the low-order part of a decimal. Neither computers nor controllers ‘understand’ the concept of a decimal or a fraction or a degree - it is whole numbers only, in binary (https://www.electronics-tutorials.ws/binary/binary-fractions.html) - which, again, requires calculations both ways, discarding least-significant bits along the way.
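
To put a number on that, here is a rough Python sketch of squeezing a fraction into a single byte. The 8-bit width and the helper names are my own assumptions for illustration, not what any particular app or controller actually does.

```python
# Hypothetical example: keep only 8 fractional bits of a value between 0 and 1.
# The byte width is an assumption, not taken from any real controller.

def to_byte_fraction(x):
    """Scale x (0 <= x < 1) to 0..255, discarding everything below 1/256."""
    return int(x * 256)  # int() truncates, so the low-order bits are lost

def from_byte_fraction(b):
    """Turn the stored byte back into a fraction."""
    return b / 256

stored = to_byte_fraction(0.175)
print(stored, from_byte_fraction(stored))  # 44 0.171875
```

So 0.175 goes in and 0.171875 comes out, while something like 0.5 survives untouched because it is an exact binary fraction.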

Do that enough times and it can make a measurable difference to the workpiece.
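
A minimal sketch of that round trip, in Python. The resolutions here (whole micrometres on the metric side, whole thousandths of an inch on the inch side) are assumptions I picked for the example, not LightBurn's or any controller's real internals. It uses integers only, so the result does not depend on floating point.

```python
# Hypothetical resolutions, NOT any real app's internals:
# metric values are whole micrometres (um), inch values are whole thou (0.001").
# 1 thou = 25.4 um, so both conversions use the exact ratio 254/10.

def um_to_thou(um, truncate):
    """Micrometres to thou, either dropping or rounding the remainder."""
    if truncate:
        return (um * 10) // 254
    return (um * 10 + 127) // 254  # round half up

def thou_to_um(thou, truncate):
    """Thou back to micrometres, either dropping or rounding the remainder."""
    if truncate:
        return (thou * 254) // 10
    return (thou * 254 + 5) // 10  # round half up

def round_trips(um, trips, truncate):
    """Convert mm -> inch -> mm the given number of times."""
    for _ in range(trips):
        um = thou_to_um(um_to_thou(um, truncate), truncate)
    return um

print(round_trips(3000, 3, truncate=True))   # 2946
print(round_trips(3000, 3, truncate=False))  # 2997
```

With the low-order part simply discarded, a 3.000 mm dimension is down to 2.946 mm after three trips. With round-to-nearest at these resolutions it lands on 2.997 mm after the first trip and stays there, so how bad it gets depends on how the software handles the leftover digits.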

This doesn’t happen if you stay in metric units, because nothing has to be converted.

So, a simple question - do I use the system of units that loses accuracy or the one that doesn’t?

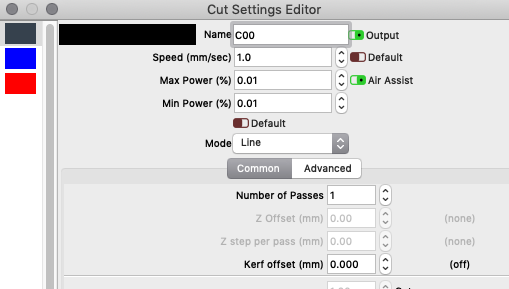

@LightBurn can give us a definitive indication of how many significant digits are kept, so we can work out how much gets discarded.

There have been some real-world instances where using one or the other has resulted in a significant, observable phenomenon.

Back in the day, when I first started work, my boss told me about his early work. He designed and built analog tracking systems for military rockets, guns and cannon.

It was all feedback loops and mercury relays and vacuum tubes. They didn’t care about metric or inch, their stuff was all in miles and degrees, so calculated on base 60.

He spanned the period from the 1930s to the 1980s, encompassing ‘the digital revolution’ and was the smartest Texan I have ever known.

My job was, initially, what they called FEP programming and design - the Front End Processor was the part of a computer that took signals in - digital and analog - and converted them to digital for the computer to work on. An ADC, more or less.

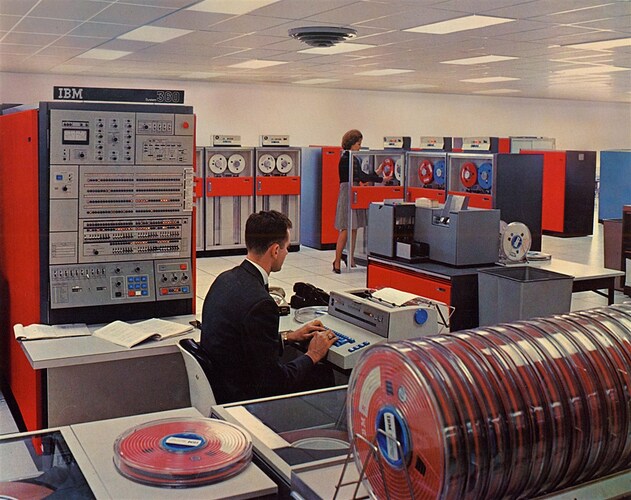

This is the 8-bit, 131,072 byte computer in question. There were no girls. We didn’t wear suits. We had long hair, wore sandals, board shorts and Mao/Che t-shirts.

I just couldn’t get my analog signals right, no matter what I did.

He, in a very Strangelovian manner (Dr. Strangelove or: How I Learned to Stop Worrying and Love the Bomb), taught me how to stop worrying and learn to love analog.

I was chasing perfect, and I was the enemy of good.

My processor was ‘hunting’ up and down the analog signal and my results were spurious. He, coming from a military targeting background, had the military mindset that it really didn’t matter what you did, as long as you hit the target or knew what was going to be hit.

But, he and they were looking at accuracy in the region of tens of yards. A battleship makes a big target, so does a factory. A fighter jet, less so, and it was the high-speed planes that spurred on the revolution to digital accuracy.

So while 0.005" might be half a human hair, braid enough of them together and you can jam up the works of a 1052 Data Communications System and shut the whole thing down.

Pass a file around enough between America and the rest of the world and it can introduce errors big enough to matter.

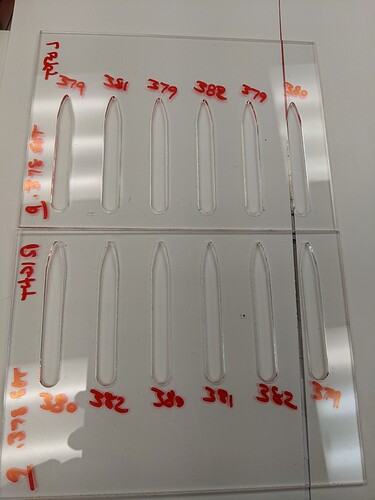

This happened to me yesterday. My friend and I swap designs - she, in Philadelphia, uses inch; I use metric. Her file was sized for ‘3 mm’, but it was really 1/8" (3.175 mm), so when I cut it on 3 mm it was sloppy as heck. I fixed it, updated the file, cut a sample, photographed it and sent it back to her. She cut it and it wouldn’t fit. That is, of course, completely different to rounding errors, but it is probably more relevant. A 0.175 mm difference in dimension, every 3 mm, results in a completely different object.
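
The arithmetic behind that 0.175 mm, as a quick Python check. The only hard fact used is 1 inch = 25.4 mm exactly; the run of ten features is a made-up figure to show how the gap stacks up.

```python
from fractions import Fraction

MM_PER_INCH = Fraction(254, 10)  # 1 inch is exactly 25.4 mm

def inch_to_mm(inches):
    """Exact inch to mm conversion using rational arithmetic."""
    return Fraction(inches) * MM_PER_INCH

eighth_mm = inch_to_mm(Fraction(1, 8))  # what '1/8 inch' really measures
gap_mm = eighth_mm - 3                  # versus a nominal 3 mm

print(float(eighth_mm), float(gap_mm))  # 3.175 0.175

# Hypothetical design with ten such features in a row:
print(float(10 * gap_mm))               # 1.75
```

Ten features in, the two versions of the ‘same’ design disagree by 1.75 mm.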

Using the Roman uncia, using fractions, in the 21st century, on digital machinery is counter-productive.