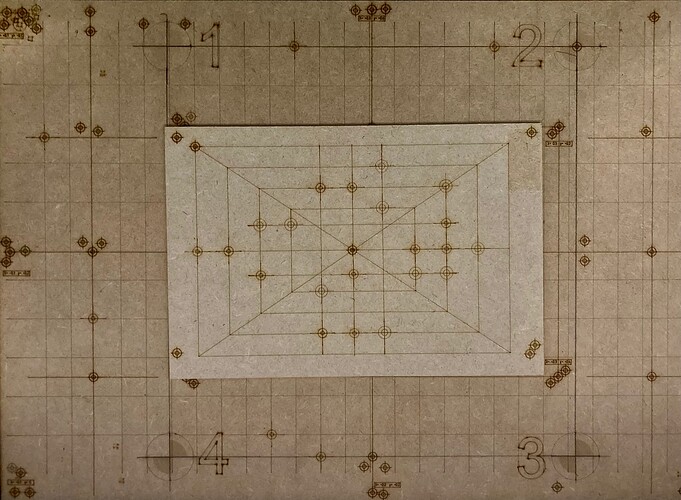

I’m trying to install an Arducam 64MP now. This one is impressive!

The camera has to plug into interface hardware like a Raspberry Pi 4/5, and you install software to stream it to the PC. Ethernet is supposed to offer the lowest latency, which matters when we’re talking about an imager of this size.

The RPi seems like an expensive middle component, but it offers something pretty useful: low-level control over the camera’s autofocus lens and AGC. It could even do the flattening on the RPi and offload that from LB. There’s a whole lot of potential with the OpenCV library.

The single biggest problem I found with using a bed camera is the camera’s internal AGC (automatic gain control, i.e. brightness control), which sums up the brightness level of the whole field of view. It probably weights the image center higher, too.

This is bad because most of my FOV in normal use is our jet-black steel honeycomb. So it sees 90% jet black and 10% light plywood and turns the gain up to make the different shades and detail of the black honeycomb show up, but that raises the gain WAY too high for the plywood, which can appear washed out. The edges will be blurry, and lines engraved in it would be lost.

You’re probably thinking “just adjust your lighting”. It has little effect. More light or less light affects the brightness of honeycomb and stock equally. If you see the light-colored work totally washed out by over-AGC and you dim the room lights, the camera still sees black (just even dimmer) honeycomb on 90% of the FOV, raises the AGC to counter the lower light as it was designed to, and the light-colored work on 10% of the FOV is washed out just the same. Shading with a neutral density filter will give a similar result.
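Here's a quick back-of-envelope sketch of why the lighting doesn't matter. It assumes a very simple AGC that scales the whole frame so the average lands on a fixed target, and the reflectance numbers (3% for honeycomb, 60% for plywood) and the 0.4 target are made up for illustration, not measured from any real camera.

```python
def agc_output(reflectance, light, gain):
    """Pixel value after gain, clipped to the sensor's 0..1 output range."""
    return min(1.0, reflectance * light * gain)


def global_agc_gain(scene, light, target=0.4):
    """Gain that brings the area-weighted mean brightness to `target`.

    `scene` is a list of (area_fraction, reflectance) pairs.
    """
    mean = sum(area * refl * light for area, refl in scene)
    return target / mean


# Assumed numbers: 90% of the view is honeycomb reflecting 3% of the light,
# 10% is plywood reflecting 60%.
SCENE = [(0.90, 0.03), (0.10, 0.60)]


def rendered_levels(light):
    """Return [honeycomb_level, plywood_level] after the AGC has settled."""
    gain = global_agc_gain(SCENE, light)
    return [round(agc_output(refl, light, gain), 3) for _, refl in SCENE]


# Dim room, normal room, very bright room: the result is the same each time.
for light in (0.25, 1.0, 4.0):
    print(light, rendered_levels(light))
```

The light level cancels out of the math: the gain is inversely proportional to the light, so the plywood always ends up at the same (clipped) value. With these assumed numbers the plywood would want roughly 2.8 times full scale, so it clips to white no matter how the room is lit.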

Adjusting the brightness/contrast in LB was of little use. The information just isn’t there in the stream. An overexposed, washed-out image of the work is 100% white or near to it across the whole piece and a small margin around it, and the original edges are lost. There’s nothing to recover with LB. And the regular USB cameras I’ve used do not allow the PC to command the AGC to the “right” point.

I really wouldn’t want autofocus normally, since I’d expect it to erroneously lock onto the honeycomb if it’s most of the FOV. Or, it could lock on the gantry. But since the RPi has low level hardware control, it can disable the autofocus and lock it at an arbitrary setting.

This would be the best, and it seems achievable: OpenCV software running on the RPi tries to recognize honeycomb areas. On my laser, it would be easy; that stuff is really black even after being pressure-washed.
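A minimal sketch of what "recognize honeycomb" could look like, assuming the honeycomb really is much darker than anything you'd put on it. It uses plain NumPy on a greyscale frame; the function name, the threshold of 40, the 8-pixel block size and the 80% fraction are all my own placeholder values, not anything from LB or Arducam. Working in blocks rather than single pixels is so that thin dark engraved lines on the plywood don't get flagged as honeycomb.

```python
import numpy as np


def honeycomb_mask(gray, dark_threshold=40, block=8, dark_fraction=0.8):
    """Return a boolean mask that is True where the frame looks like honeycomb.

    gray: 2-D uint8 array (a greyscale frame).
    A block x block tile counts as honeycomb when at least `dark_fraction`
    of its pixels are darker than `dark_threshold`.
    """
    h, w = gray.shape
    rows, cols = h // block, w // block
    # Ignore any partial tiles on the right and bottom edges.
    trimmed = gray[:rows * block, :cols * block]
    dark = trimmed < dark_threshold
    # Fraction of dark pixels inside each tile.
    frac = dark.reshape(rows, block, cols, block).mean(axis=(1, 3))
    tile_is_honeycomb = frac >= dark_fraction
    # Expand the per-tile decision back to pixel resolution.
    mask = np.zeros((h, w), dtype=bool)
    mask[:rows * block, :cols * block] = np.repeat(
        np.repeat(tile_is_honeycomb, block, axis=0), block, axis=1
    )
    # Edge pixels outside the tiled area stay False, i.e. treated as "work".
    return mask


# Synthetic test frame: dark bed with one bright 16x16 "plywood" patch.
frame = np.full((64, 64), 10, dtype=np.uint8)
frame[16:32, 16:32] = 200
mask = honeycomb_mask(frame)
print(mask.sum(), "of", mask.size, "pixels flagged as honeycomb")
```

With OpenCV on the RPi the same idea could probably be done with `cv2.threshold` plus a morphological open/close to clean up the mask, but I haven't tried that on a real frame.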

Once identified as honeycomb, that space would be assigned zero weight for AGC and autofocus adjustment. So it will normalize the AGC for just “the work” and let the honeycomb fall out of the new dynamic range. It would be lost in blacker blackness. But fine, don’t care.
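Roughly, the zero-weight metering could work like this. It's a sketch with NumPy only; `metered_gain`, the target level of 110 (on a 0..255 scale) and the gain cap are names and numbers I picked for the example, and the simple `frame < 40` threshold just stands in for whatever honeycomb detector ends up being used.

```python
import numpy as np


def metered_gain(gray, honeycomb, target=110.0, max_gain=8.0):
    """Gain that brings the mean of the NON-honeycomb pixels to `target`.

    gray: 2-D uint8 frame. honeycomb: boolean mask, True = ignore this pixel.
    """
    weights = (~honeycomb).astype(np.float64)  # honeycomb gets zero weight
    total = weights.sum()
    if total == 0:
        return 1.0  # nothing but honeycomb in view, leave the gain alone
    mean = (gray.astype(np.float64) * weights).sum() / total
    if mean <= 0:
        return max_gain
    return float(min(max_gain, target / mean))


# Synthetic frame: dark bed (level 10) with a bright plywood patch (level 220).
frame = np.full((64, 64), 10, dtype=np.uint8)
frame[16:32, 16:32] = 220
mask = frame < 40  # stand-in honeycomb detector for this example

everything = np.zeros_like(mask)  # no pixels excluded = ordinary global AGC
print("global gain:", metered_gain(frame, everything))
print("masked gain:", metered_gain(frame, mask))
```

In this made-up frame the global metering asks for a gain well above 4, which would push the 220-level plywood far past white, while the masked metering asks for 0.5 and puts the plywood right at the target. Actually sending that gain to the sensor would mean turning off the camera's own auto-exposure and setting gain/exposure manually from the RPi, which I'm assuming the Arducam driver allows.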

Autofocus could be helpful for tweaking the focus. Again, the RPi has direct control, so the range of focus it tries can be limited. You’d want to lock its range to the distance from the camera to your material’s top surface, plus or minus a couple of centimeters.
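Here's a small sketch of limiting the focus range. It assumes the lens position is expressed in dioptres (1 / distance in metres), which is how libcamera documents its `LensPosition` control; whether the Arducam 64MP driver is calibrated that way is something I haven't verified. The function names and the 0.50 m camera height are hypothetical.

```python
def focus_window(surface_distance_m, margin_m=0.02):
    """Return (min, max) lens positions in dioptres for surface +/- margin."""
    near = surface_distance_m - margin_m
    far = surface_distance_m + margin_m
    if near <= 0:
        raise ValueError("margin must be smaller than the surface distance")
    # Farther away means fewer dioptres, so the far limit is the minimum.
    return 1.0 / far, 1.0 / near


def clamp_lens_position(requested, window):
    """Keep a requested lens position inside the allowed window."""
    lo, hi = window
    return max(lo, min(hi, requested))


# Example: material top surface 0.50 m below the camera, +/- 2 cm.
window = focus_window(0.50)
print("allowed lens positions (dioptres):", window)
# A focus sweep that wanders off toward the gantry or honeycomb gets pulled back.
print(clamp_lens_position(5.0, window), clamp_lens_position(0.0, window))
```

The idea would be to run the focus search yourself in manual focus mode and pass every candidate position through the clamp, so it can never lock onto something outside that window.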

I see the fundamental element here is being able to detect honeycomb vs “work”.