Hello,

I have been lurking on this forum (along with several others) from time to time while trying to solve issues on my own, and more often than not I would find a solution.

I have finally reached a point where no search would help answer my question, and seeing that this community has a lot of users who spare no keyboard, I decided to turn here.

So, after a short introduction, here goes my first post:

I have been using an 80W tube and an 80W power supply.

The new tube I got is 90W, and manufacturers don’t have 80W tubes anymore.

It looks the same, the dimensions are the same, and I can’t tell what exactly changed so that it now provides 10W more.

For whatever reason, manufacturers hide detailed technical data and do not keep the old data, so I cannot compare the current and voltage numbers of the old and new tubes.

What bugs me is this: to get the most out of the tube without sacrificing longevity (longevity is imperative), would it make more sense now to use an 80W power supply or a 100W one?

Also, what would happen if I were to use, let’s say, a 130W power supply?

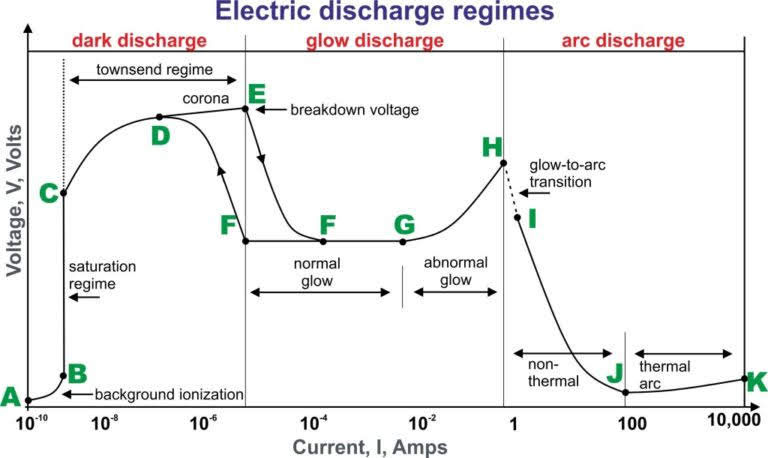

I’ve seen posts where people using a PS rated for more power than the tube don’t get any beam output when the power setting is below 20% or above 80%. *1

Everybody keeps talking about current and how a stronger PS will shorten tube life if it is used with more current than the tube is designed to handle. I get that.

Power is voltage times current.

What happens when a tube is rated for, let’s say, 24mA (for long life), 19kV ignition voltage and 17kV working voltage, and it gets connected to a PS that provides, let’s say, 40kV ignition voltage and 36kV working voltage, while at the same time using 24mA or less?
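To put rough numbers on that example, here is a minimal Python sketch of the power = voltage x current arithmetic. It assumes the working voltage and the current can simply be multiplied, which gives electrical input power and not the laser output power the tube is rated by. The function name `electrical_power_w` is made up for illustration, and the 36kV figure is my hypothetical, not a measured value.

```python
def electrical_power_w(voltage_kv: float, current_ma: float) -> float:
    """Electrical input power in watts, from voltage in kV and current in mA."""
    # kV * mA = (1e3 V) * (1e-3 A) = W, so the unit prefixes cancel out.
    return voltage_kv * current_ma


# Tube's rated working point from the example: 17 kV at 24 mA.
rated = electrical_power_w(17, 24)

# Hypothetical case: the PS holds 36 kV across the tube at the same 24 mA.
hypothetical = electrical_power_w(36, 24)

print(f"17 kV x 24 mA = {rated:.0f} W")         # 408 W
print(f"36 kV x 24 mA = {hypothetical:.0f} W")  # 864 W
print(f"ratio = {hypothetical / rated:.2f}")    # 2.12
```

So on paper that would be roughly twice the electrical power going into the same tube at the same current, if the tube really did run at that voltage.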

It should generate more power, but I am inclined to think that it comes at a cost. How would it affect the tube long term?

Has anyone actually tested and played with this?

*1 It seems to me that this is voltage-related, and I’ve never seen anyone discuss it.